Executive Summary

The AI Trust Index measures how legal professionals are actually experiencing AI today, tracking confidence, usage, barriers, and organizational readiness. The findings reveal a profession that is actively using AI, but doing so with caution and clear conditions.

Headline findings

- AI usage is widespread, embedded in everyday legal tasks across roles and organizations.

- Trust is conditional: most legal professionals are only somewhat confident in AI accuracy and continue to verify outputs.

- Confidence is rising year over year, even amid public AI failures, court scrutiny, and regulatory attention.

- Fragmented systems are the root trust problem. Disconnected data, documents, and workflows undermine confidence and limit AI’s impact.

Legal teams want AI—but they only trust it when it is grounded in their own data, documents, and workflows. Trust does not come from general-purpose tools operating in isolation. It comes from AI that understands the full context of legal work and operates on a unified source of truth.

Research methodology

This report is based on an online survey of 115 legal professionals, conducted by Researchscape International, an independent market-research consultancy. The survey was fielded October 22–28, 2025, with respondents from the United States (91%) and Canada (9%).

The respondents work in law firms, government agencies, and corporate legal teams. They were distributed across a variety of roles, from partner to paralegal, and operate in a broad variety of legal practice areas. Results were not weighted.

Because this was not a probability-based sample, a traditional margin of error does not apply. The estimated credibility interval is ±13 percentage points for questions answered by all respondents, with larger intervals for smaller subsamples. Researchscape applied rigorous data-quality controls to minimize sampling and non-sampling error.

Introduction: Why AI Trust Matters in Law

AI is already part of legal work. But in law, getting it wrong carries real consequences. Errors don’t just affect efficiency; they impact reputations, ethical obligations, client outcomes, and regulatory compliance.

Today’s legal teams are often working across fragmented tools and disconnected data, forcing critical decisions to be made without a complete or consistent picture. When intelligence is scattered, confidence erodes, which in turn makes it harder to trust both the technology and the outcomes it produces.

As a result, while legal professionals are experimenting with AI at unprecedented levels, reliance remains cautious. This trust gap reflects the reality of a high-risk profession operating under intense scrutiny from courts, regulators, and clients alike.

The survey results suggest that in law, lasting AI adoption doesn’t hinge on speed or novelty. It hinges on trust—trust built through systems that are accurate, accountable, and connected enough to support sound judgment.

CHAPTER 1

The State of AI Trust in Law

Do legal professionals trust AI today?

The short answer: cautiously, and increasingly so.

This research shows that legal professionals are engaging with AI in real work. But trust remains conditional. Most legal professionals express some confidence in AI, yet only a small minority feel fully assured.

This chapter summarizes how legal professionals feel about AI today: how much they trust it, how that trust is changing, and where hesitation remains.

At a high level, four themes emerge from the data that follows:

- Lawyers are increasingly experimenting with AI.

- Confidence is growing—but slowly. Experience and familiarity are improving perceptions, even amid public AI failures and increased scrutiny.

- Verification remains the norm. AI is treated as an assistant, not an authority.

- Trust is conditional, fragile, and closely tied to how—and where—AI is embedded in legal workflows.

What follows is a closer look at how legal professionals assess AI today, beginning with their confidence in AI accuracy and personal use.

Overall confidence: cautious but present

Most legal professionals report at least some level of confidence in AI, especially when it comes to accuracy.

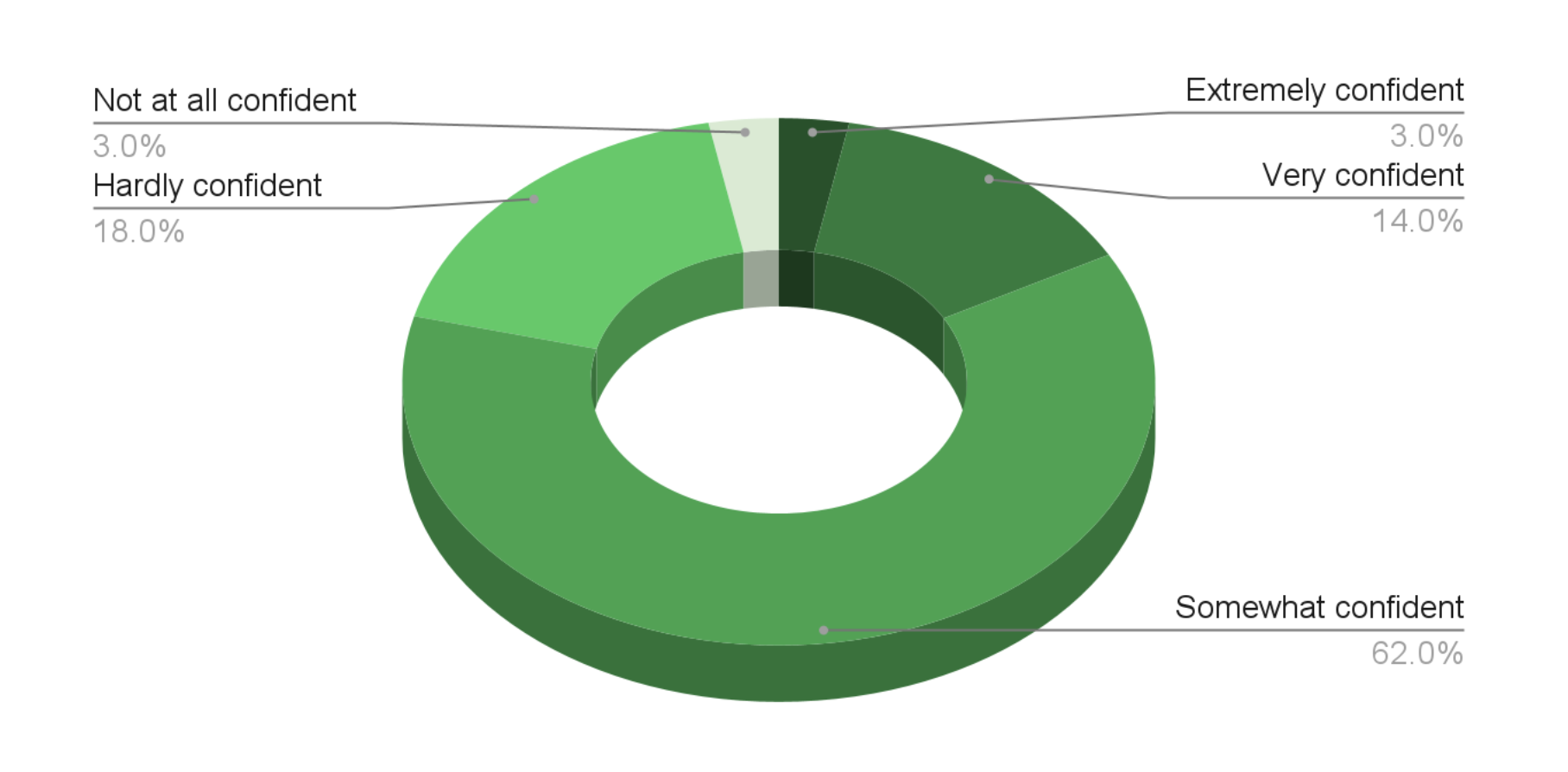

Confidence in AI accuracy:

➡ Nearly 80% express at least some confidence, but only a small fraction feel fully assured.

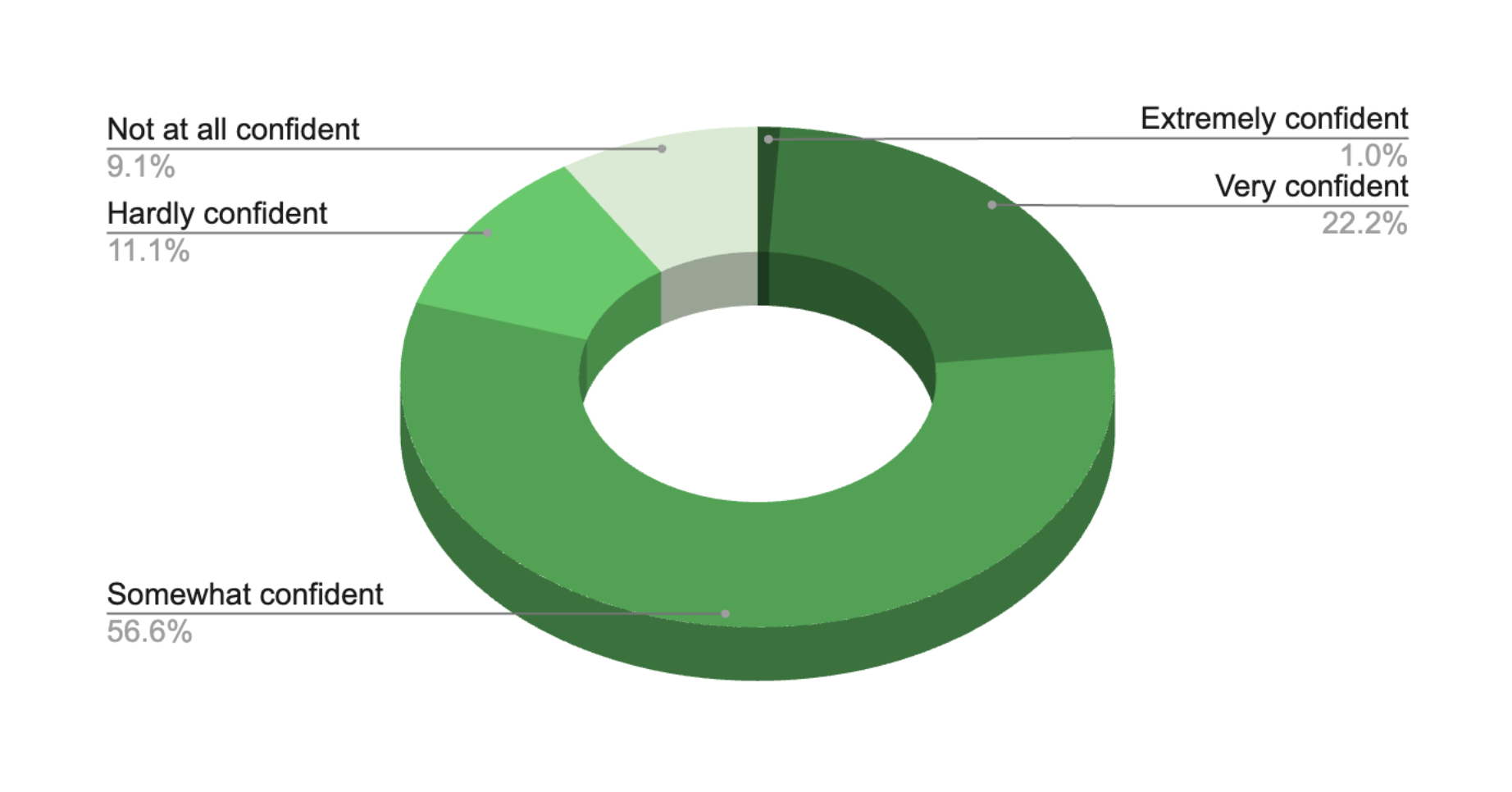

Confidence in personal use mirrors accuracy concerns

Legal professionals are slightly more confident in using AI than in trusting its outputs outright.

Confidence using AI for legal work:

This gap reflects a common pattern: Lawyers are comfortable experimenting, but still verify.

Confidence is trending upward

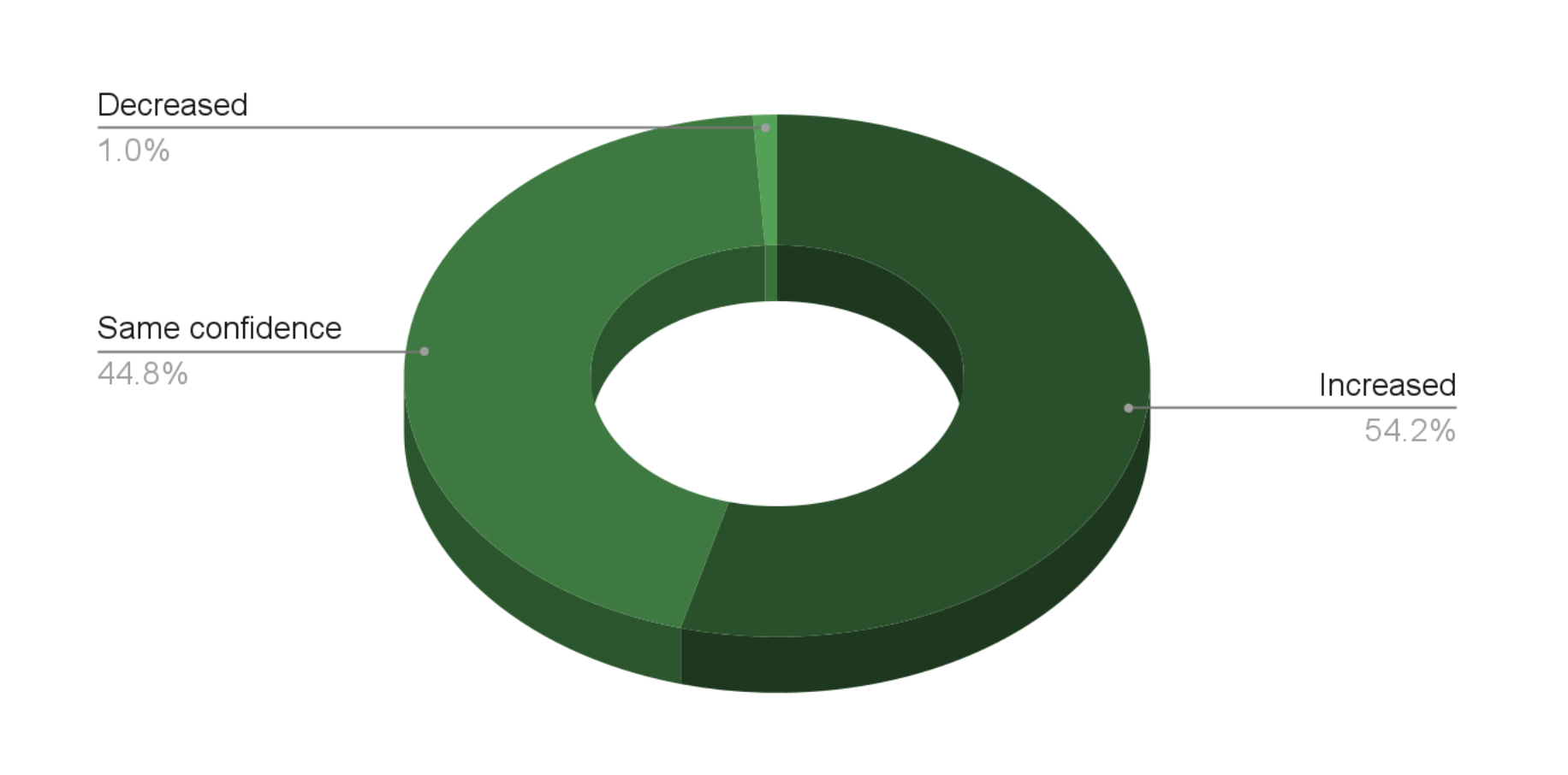

Among the legal professionals surveyed, trust in AI is increasing year over year, with over half reporting increased confidence and only 1% reporting decreased confidence.

Change in confidence over the past year:

Based on the open-ended answers provided by respondents, this growth in confidence is driven by:

- Greater familiarity.

- Better understanding of limitations.

- More realistic expectations.

CHAPTER 2

How AI Is Used Today in Law

AI is already part of daily legal work

AI adoption in law is no longer theoretical. Our survey shows AI usage in everyday tasks across roles, firms, and departments.

However, its role is usually narrow, cautious, and largely reactive.

Today, legal professionals primarily use AI in low-risk situations where the boundaries are clear and the consequences of error are limited. In most cases, the user supplies the data and asks AI to perform a contained task: summarize documents, locate information, draft routine communications, or organize materials. These prompts deliver real value, saving time and reducing administrative drag without replacing legal judgment.

This pattern reflects how trust is currently earned in law. AI is most accepted when it operates under direct human control and within a clearly defined scope. Lawyers are comfortable asking AI to process what they already know, but far less comfortable asking it to infer, connect, or decide.

As a result, much of today’s AI usage in law is reactive. AI responds to prompts rather than anticipating needs. It works on isolated inputs rather than across matters. It assists with individual tasks, but rarely supports broader legal decision-making or operational insight.

While a conservative approach is practical, and often necessary, it also highlights a growing gap between AI adoption in law and in other industries. In a mature technology environment, AI is embedded into systems of record, allowing broad analysis of connected data. This is not just fast intelligence, but complete intelligence to surface risks, identify patterns, and support decisions in real time.

The next evolution, Agentic AI, will not be limited by disconnected tasks and partial context. Agentic AI will be able to proactively manage routine tasks like document review, deadline tracking, and case insights, allowing teams more time to focus on strategy, client advocacy, and higher-value legal work.

The sections that follow examine where AI is used most today, who is using it, and what benefits legal professionals are already seeing. Together, they also reveal the boundaries of today’s approach—and hint at the opportunity that emerges when intelligence is connected rather than fragmented.

Where AI shows up first: research and documents

AI adoption is strongest where legal work is text-heavy and time-intensive. Our survey shows the top use cases for AI in law are:

- Legal research

- Document review and analysis

- Document management and assembly

- Workflow automation

- Contract analysis and review

- Knowledge management

These “beachhead” use cases share a common theme: high volume, repeatable work where speed matters.

Workflow and operational uses like billing, intake, and predictive analytics lag behind.

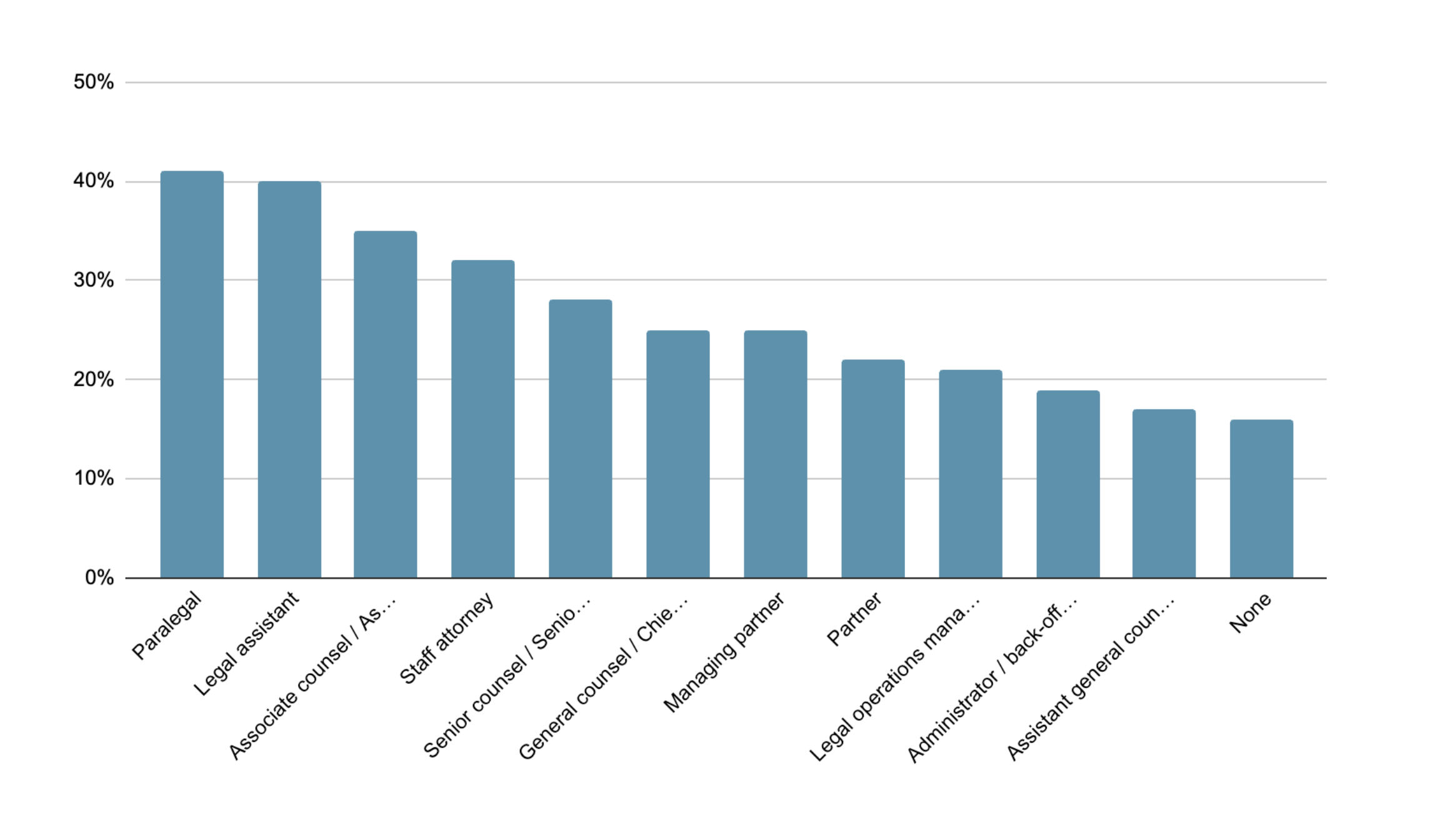

AI is used across the organization

AI usage spans nearly every level of the legal organization, from managing partner to paralegal. But the highest current usage appears to be among staff and junior attorneys.

What levels of the organization are using AI?

What lawyers actually ask AI to do

Our survey shows AI is most trusted when it helps legal professionals deal with high volumes of information, rather than proactively navigate complexity.

Most common AI prompts include:

- Locate key prior case documents or memos

- Summarize internal notes, correspondence, or case status

- Draft client updates and routine communications

- Organize documents by topic, provider, or date

- Identify deadlines, events, and next steps

Below are some example prompts. Which are most typical of how you use AI?

| 33% | Locate key prior case documents or memos related to this issue. |

| 29% | Summarize the case and its current status, including recent activity and next steps. |

| 27% | Draft a client update message summarizing recent case progress. |

| 27% | Summarize the key points and inconsistencies in the deposition transcripts. |

| 26% | List all upcoming events, deadlines, and procedural dates for this case. |

| 24% | Draft and assemble a demand letter or representation notice for this case. |

| 20% | Generate deposition questions based on this expert report. |

| 19% | Calculate total hours worked and disbursements on this case. |

| 15% | Summarize billing entries and outstanding balances for this client. |

These prompts reflect a clear pattern: AI is used to create clarity, not conclusions. It’s most often used in lower-risk contexts for one-off tasks.

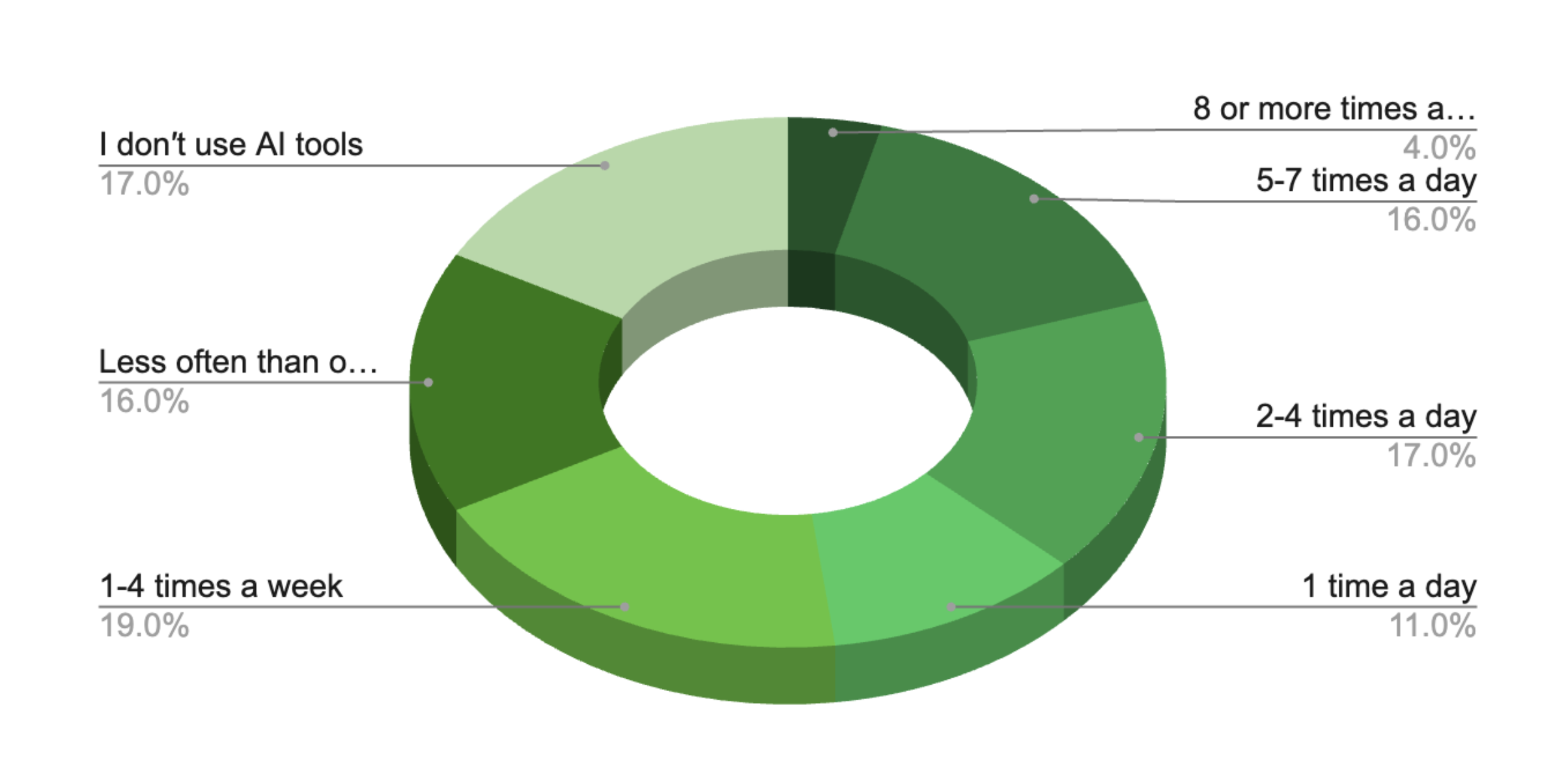

Frequency: AI is now a routine part of legal work

Our respondents showed that AI usage is consistent, but not overwhelming:

- About half use AI daily.

- Others use it multiple times per week.

- A meaningful minority still don’t use AI at all.

About how many times a day or week do you use AI at work?

This reflects a cautious but growing integration of AI into legal workflows.

Organizations also show an increased willingness to invest in AI. 64% of respondents report that their organizations are investing in AI tools.

How does AI fit in with your organization’s other technology investments?

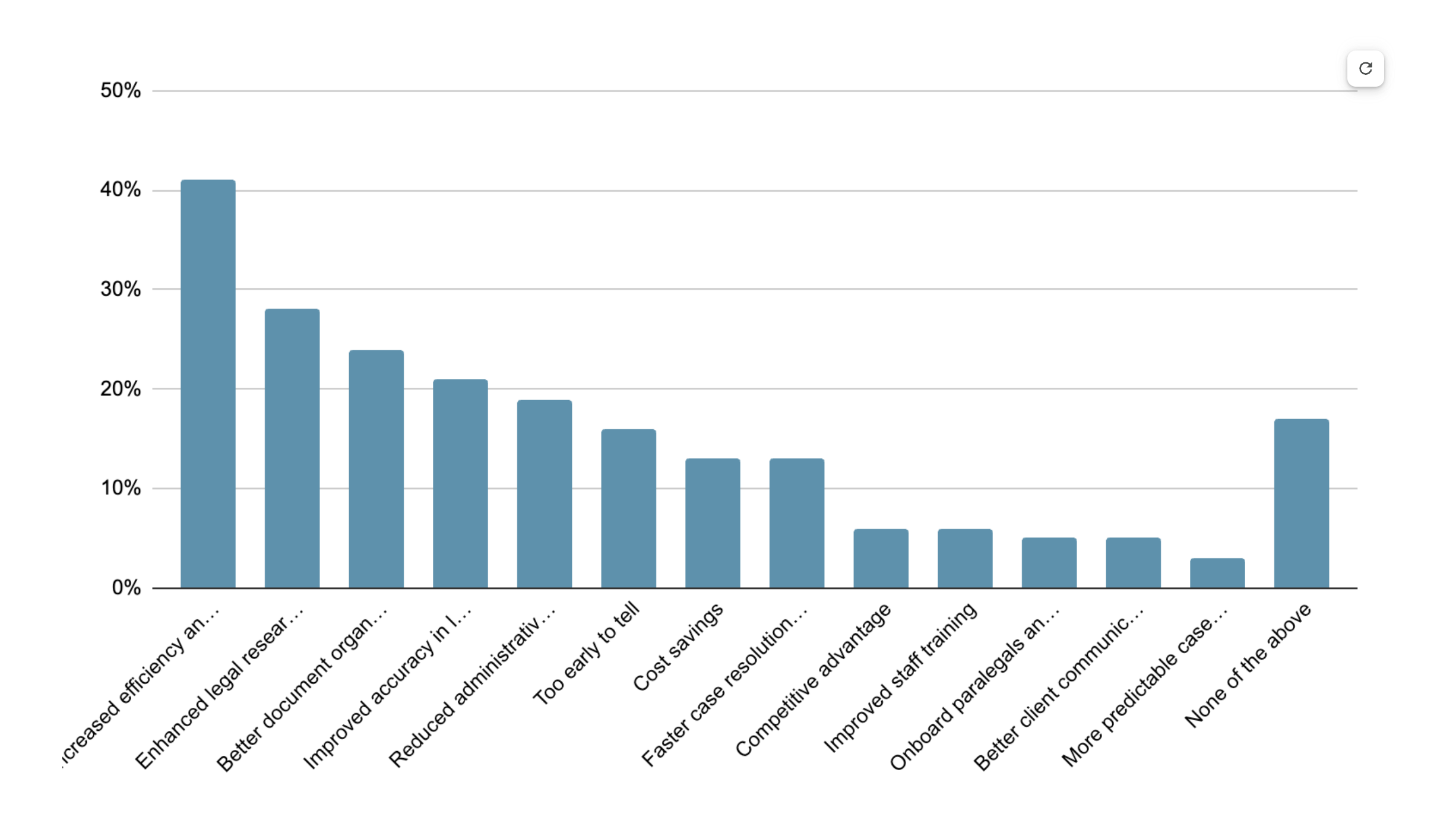

The ROI is real and measurable

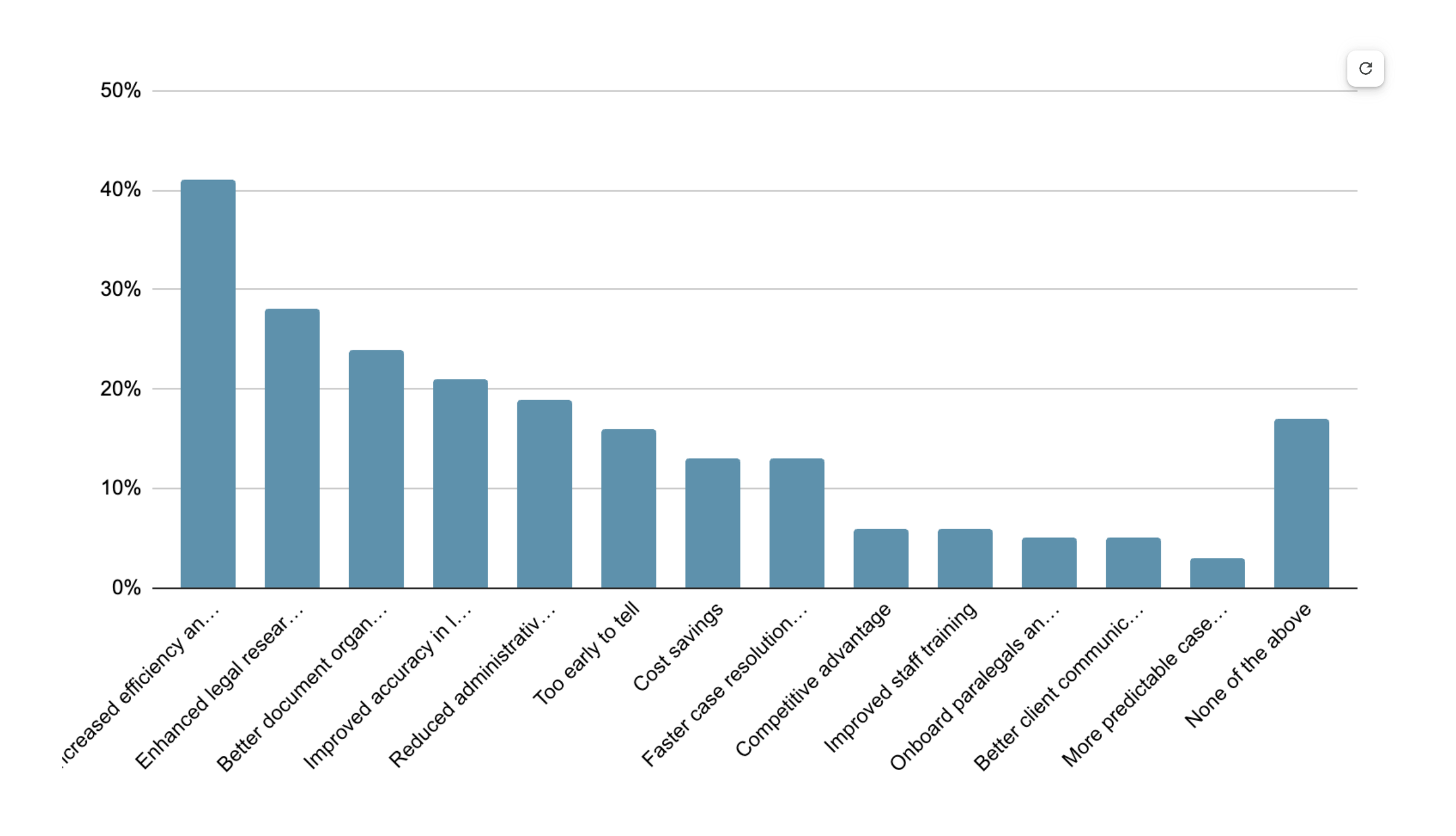

Respondents reported that AI delivers tangible value today.

Top benefits experienced:

- Increased efficiency and productivity

- Stronger legal research

- Better document organization

- Improved accuracy

For the AI tools your organization currently uses, what benefits have you experienced?

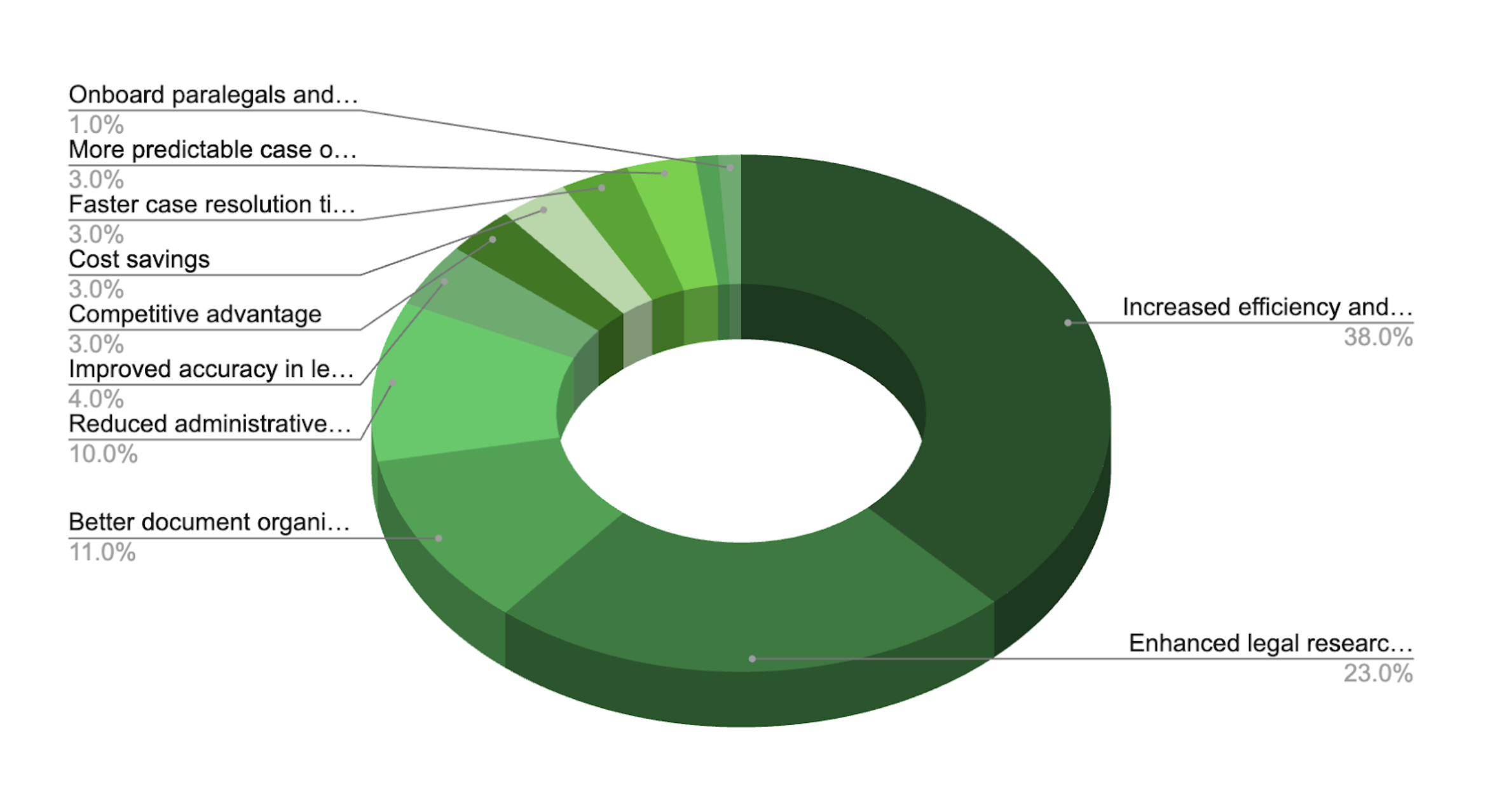

When asked to choose just one benefit:

- 38% say productivity gains are the single biggest benefit

What’s the single biggest benefit you’ve experienced?

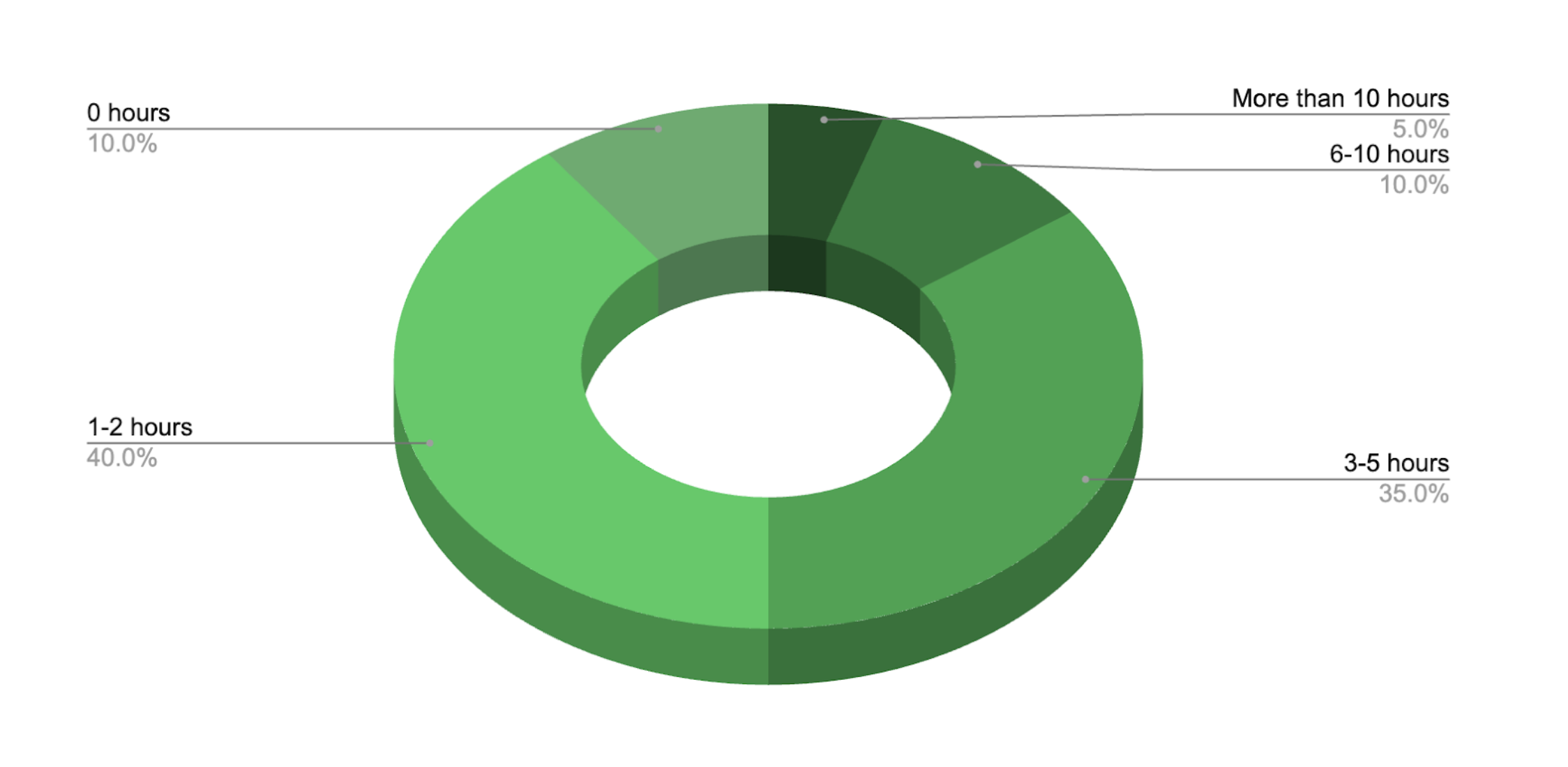

Time saved per week:

Greater efficiency is resulting in measurable time savings.

- 75% save 1–5 hours each week.

- 14% save more than 5 hours.

Approximately how many hours per week does AI technology save you personally in your legal work?

These gains may be incremental, but they are consistent and measurable.
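To make the time-savings figures concrete, the arithmetic can be sketched as below. This is a rough illustration, not a survey result. It assumes each bucket is counted at its lowest value (1 hour and 5 hours) and that the remaining respondents save nothing, so the result is a conservative floor rather than an estimate. The constant and function names are hypothetical.

```python
# Shares reported in the survey; counting each bucket at its floor is an assumption.
SHARE_1_TO_5_HOURS = 75  # percent of respondents saving 1-5 hours per week
SHARE_OVER_5_HOURS = 14  # percent of respondents saving more than 5 hours per week


def minimum_mean_hours_saved() -> float:
    """Conservative floor on average weekly hours saved per respondent.

    Counts the 1-5 hour bucket as 1 hour, the 5+ hour bucket as 5 hours,
    and everyone else as 0 hours.
    """
    return (SHARE_1_TO_5_HOURS * 1 + SHARE_OVER_5_HOURS * 5) / 100


share_saving_at_least_one_hour = SHARE_1_TO_5_HOURS + SHARE_OVER_5_HOURS
floor_per_person = minimum_mean_hours_saved()

print(f"{share_saving_at_least_one_hour}% save at least one hour per week")
print(f"Floor on average weekly savings: {floor_per_person:.2f} hours per respondent")
```

Because the top bucket is open-ended, only a lower bound can be computed from these two figures. The true average would be higher.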

Career impact: neutral today, promising tomorrow

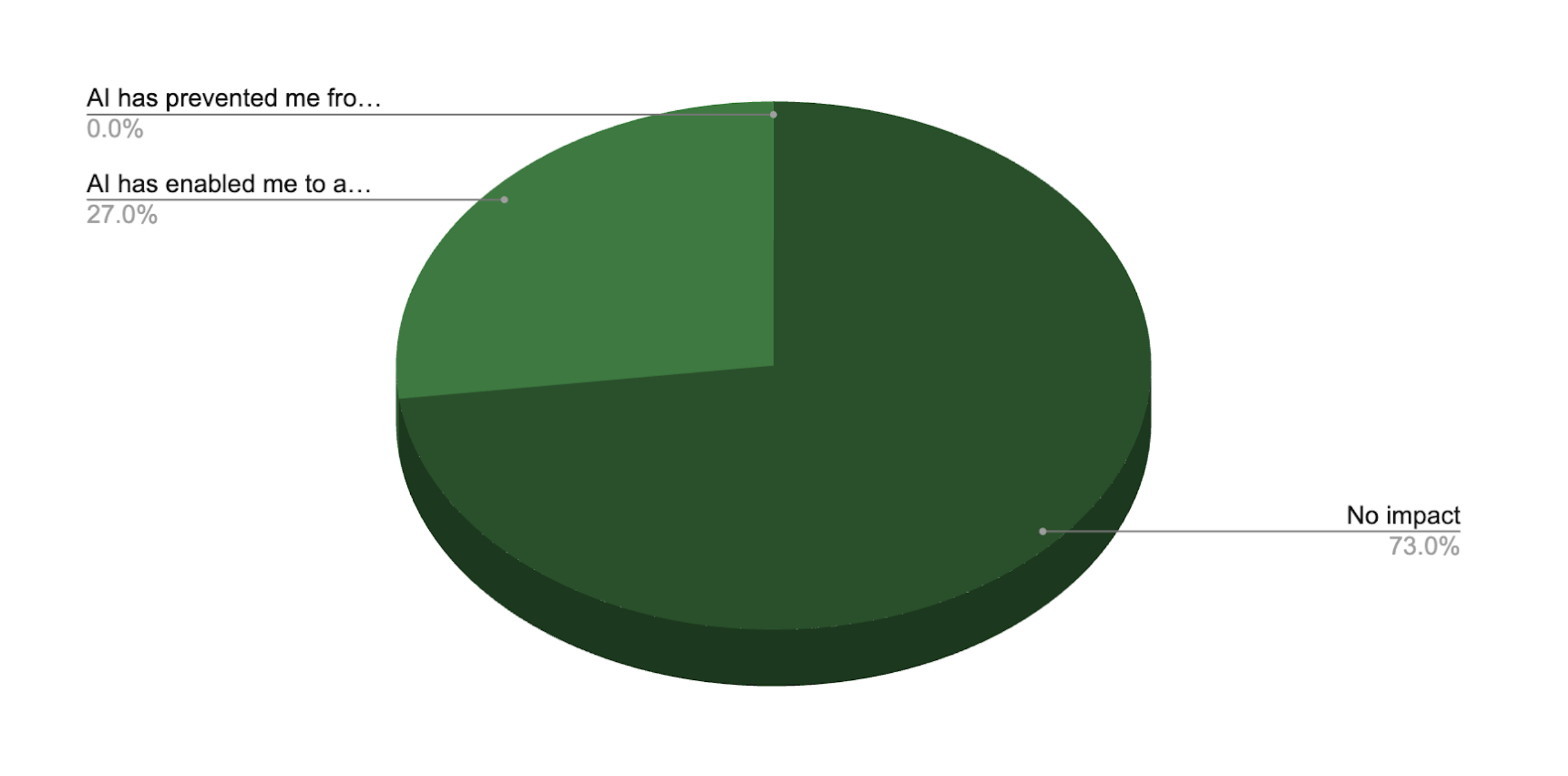

The majority of respondents say that AI has had no impact on their career progression. But 27% report that these tools have helped them advance faster. No respondents answered that AI has been an obstacle to their career development.

What impact, if any, has AI had on your career progression?

These results suggest that AI is still seen as a tool and not a differentiator. But there is reason to believe that perception is starting to shift.

Why AI usage still lags in the legal field

Despite strong usage of AI, adoption remains uneven. AI usage in the legal field still hasn’t reached the popularity it has gained in other industries.

The top barrier to broader AI adoption isn’t ambition. It’s risk.

Top blockers include:

- Accuracy and hallucination concerns

- Security and confidentiality risks

- Regulatory and compliance uncertainty

- Integration challenges and unclear ROI

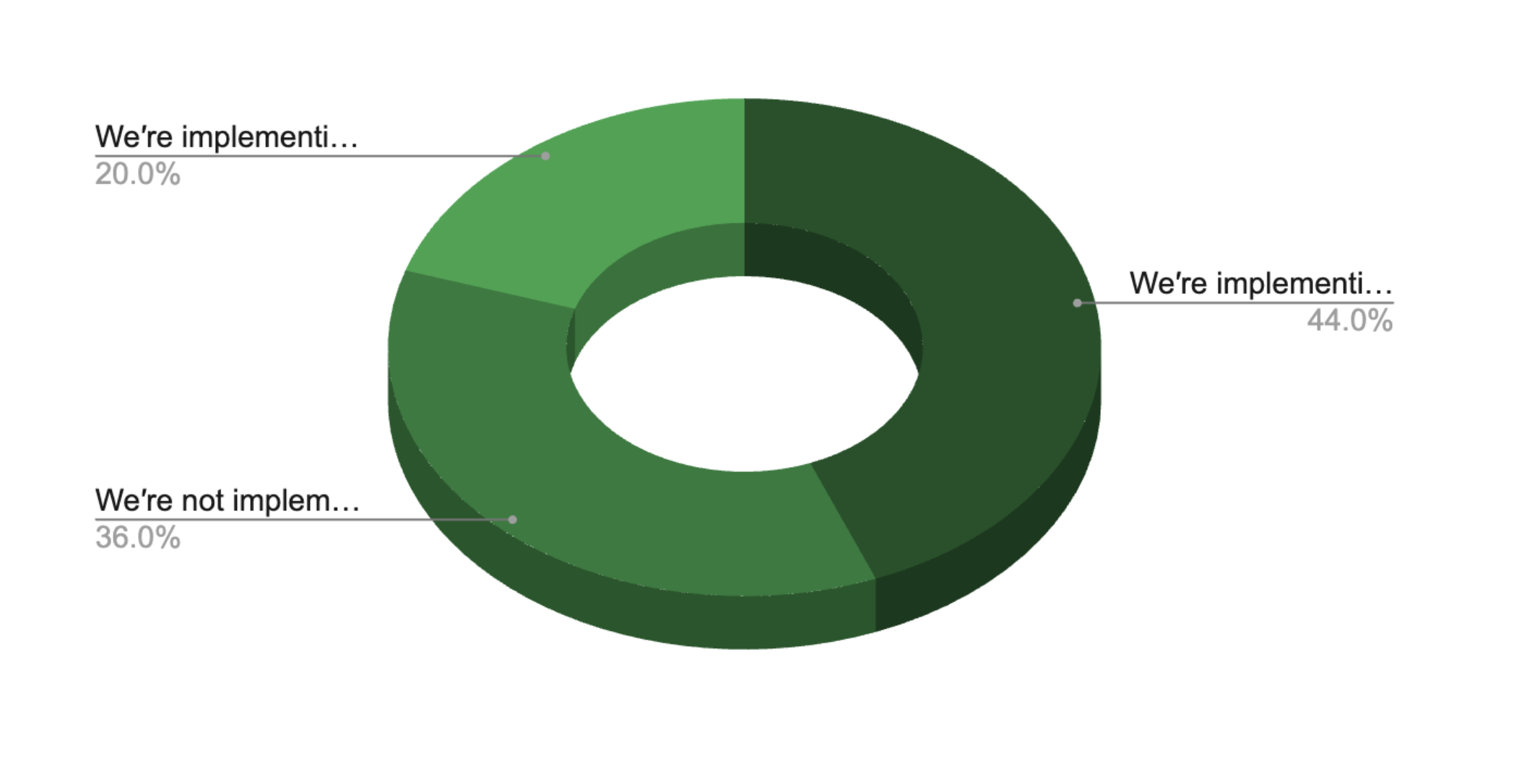

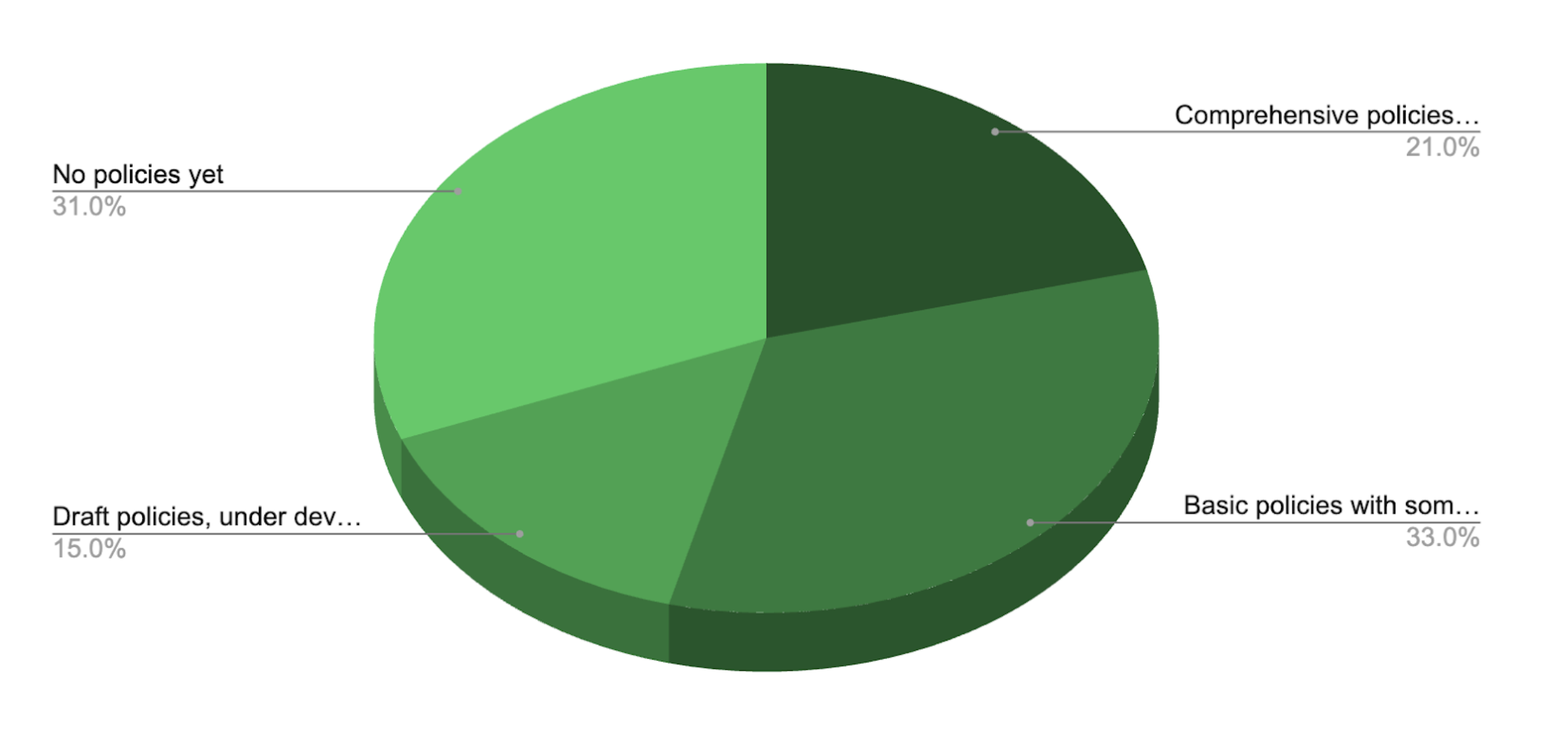

AI Use Policies

Critically, the majority of organizations surveyed do not have comprehensive AI use policies. While usage is high, many organizations lack guardrails to protect themselves and their data.

What type of AI usage policies are in place at your organization?

The American Bar Association has made expectations clear. ABA Formal Opinion 512 states:

Managerial lawyers must establish clear policies regarding the law firm’s permissible use of GAI [generative artificial intelligence], and supervisory lawyers must make reasonable efforts to ensure that the firm’s lawyers and nonlawyers comply with their professional obligations when using GAI tools.

In other words, AI use in law is no longer just a matter of individual judgment. It is an organizational responsibility.

Without clear policies and enforcement, legal professionals are left to navigate AI on their own. This increases the risk of:

- Using unvetted or inappropriate tools.

- Exposing confidential or client data.

- Relying on inaccurate or fabricated outputs.

In mid-2023, a lawyer representing a client in a suit against an airline was caught citing fictional cases in a filed brief. He explained that he had used ChatGPT for his research.

Since then, legal AI scholar Damien Charlotin has tracked over 750 instances where lawyers were reprimanded for fake citations or other hallucinations generated by AI.

These highly publicized AI “hallucination” scandals have shown what happens when guardrails are missing: reputational damage, judicial sanctions, and loss of professional credibility.

These public mishaps are also a likely source of AI mistrust within the industry.

CHAPTER 3

The Intelligence Fragmentation Problem

Why trust in AI still lags

Our research shows that legal professionals are using AI, but remain deeply concerned about accuracy and security.

At the same time, respondents reported that the tools they rely on are highly fragmented and lack integration.

This sets up a fundamental obstacle: AI intelligence is only as good as the data it can analyze. When intelligence is scattered:

- AI sees only part of the case.

- Outputs lack context and continuity.

- Lawyers must manually verify results.

- Confidence plateaus—or declines.

One reason legal professionals don’t trust AI is that fragmented tools limit accuracy and context, which erodes user confidence.

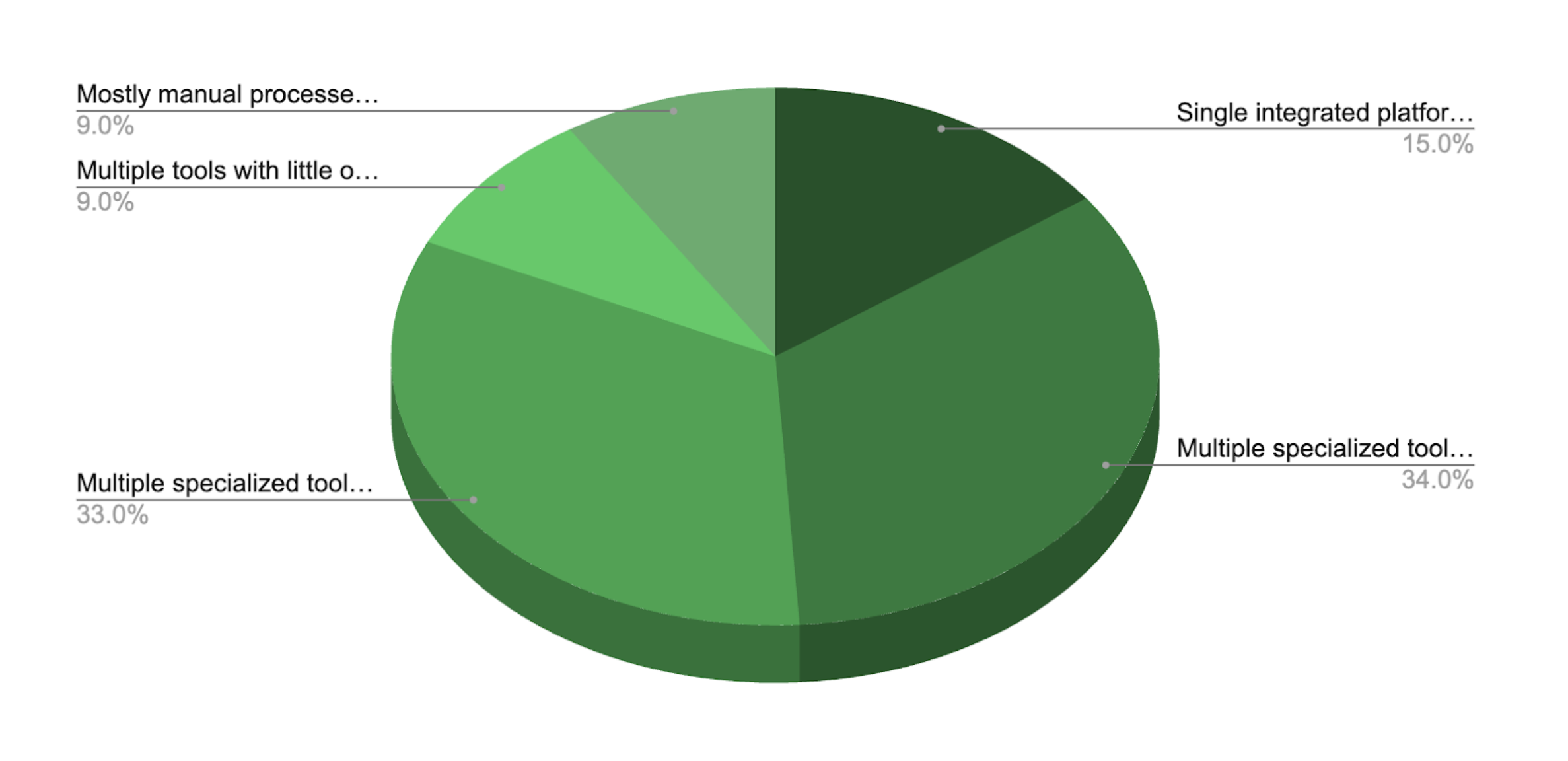

Most legal teams rely on disconnected tools

According to our survey, most legal organizations are not operating from a unified system of record.

Over half of legal teams report limited integration, and only 15% operate from a truly integrated platform.

What describes your organization’s legal technology set-up?

Reliance on siloed tools explains why AI confidence remains tentative, even as usage increases.

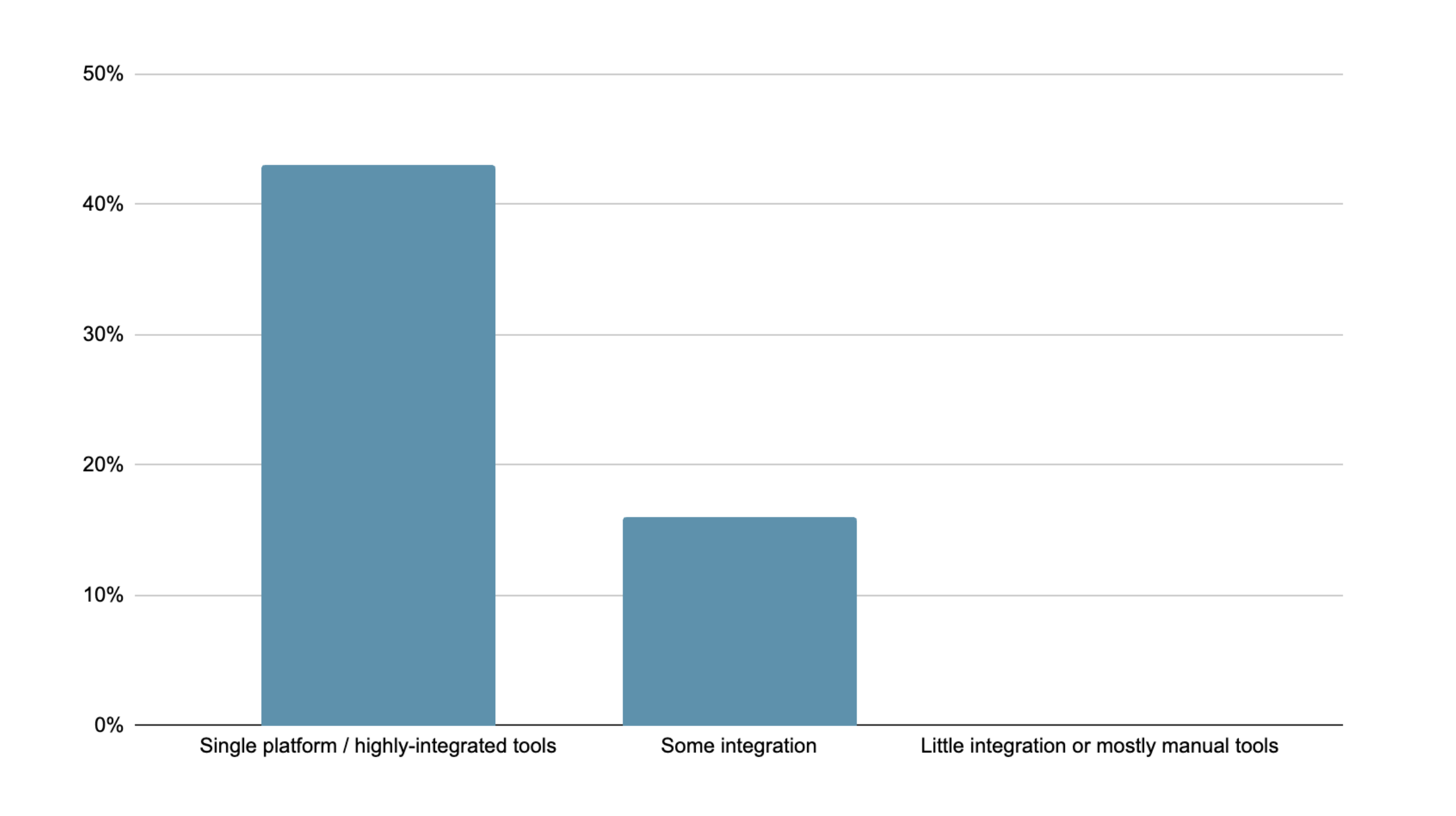

Disparate systems are a barrier to trust

Our survey shows a correlation between technological integration and trust in AI. Those who use highly integrated tools are more likely to express confidence in the accuracy of outcomes.

High confidence in AI accuracy, by level of integration

This finding makes sense. AI can’t be trusted to reason across a case when the case itself is disconnected.

Legal professionals know integration is the missing link

This trust gap isn’t theoretical: it’s reflected directly in what respondents say they need.

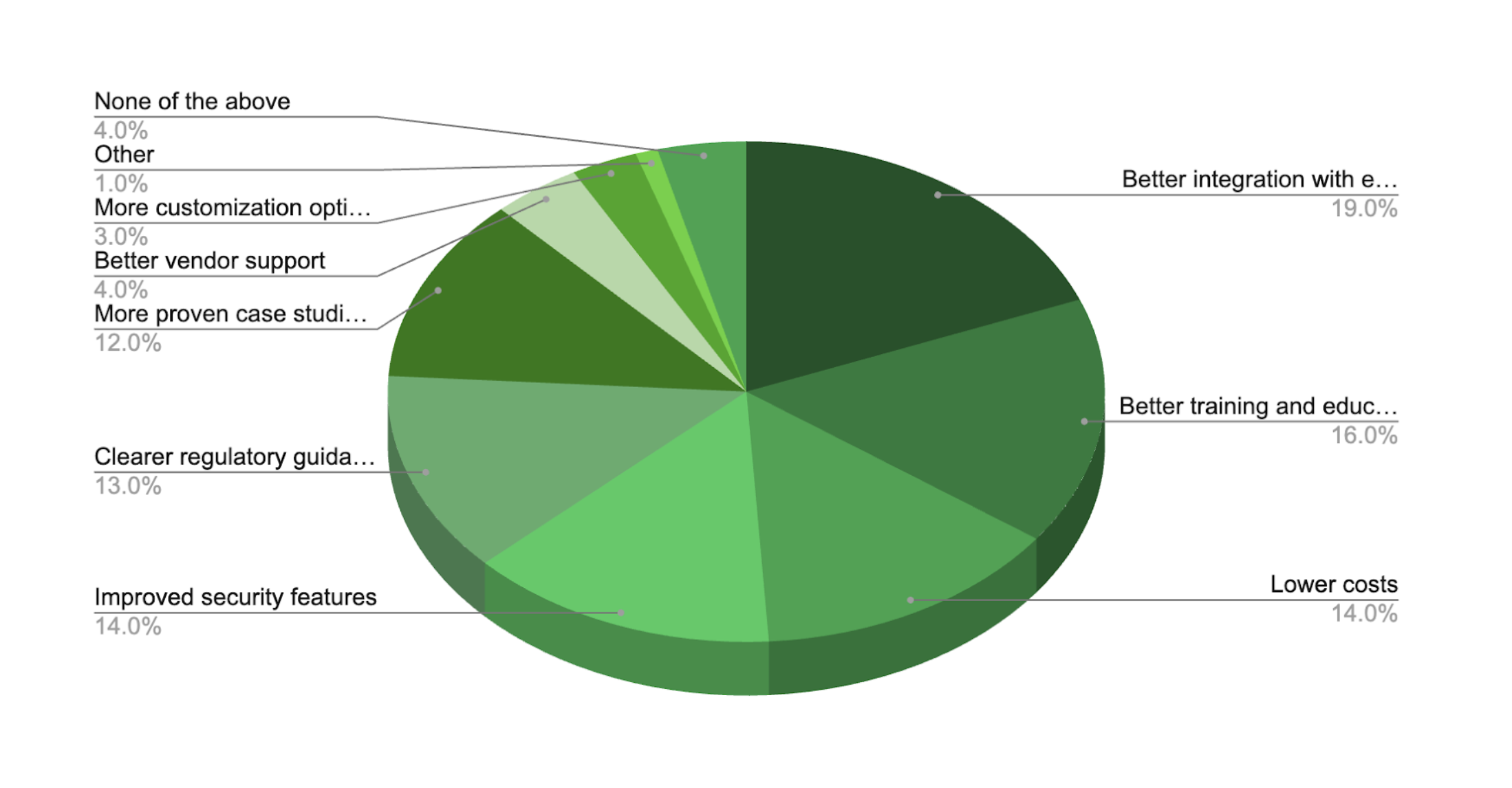

When asked what would most accelerate AI adoption, better integration ranks higher than cost, security, and compliance.

Which of the following would most help accelerate AI adoption at your organization?

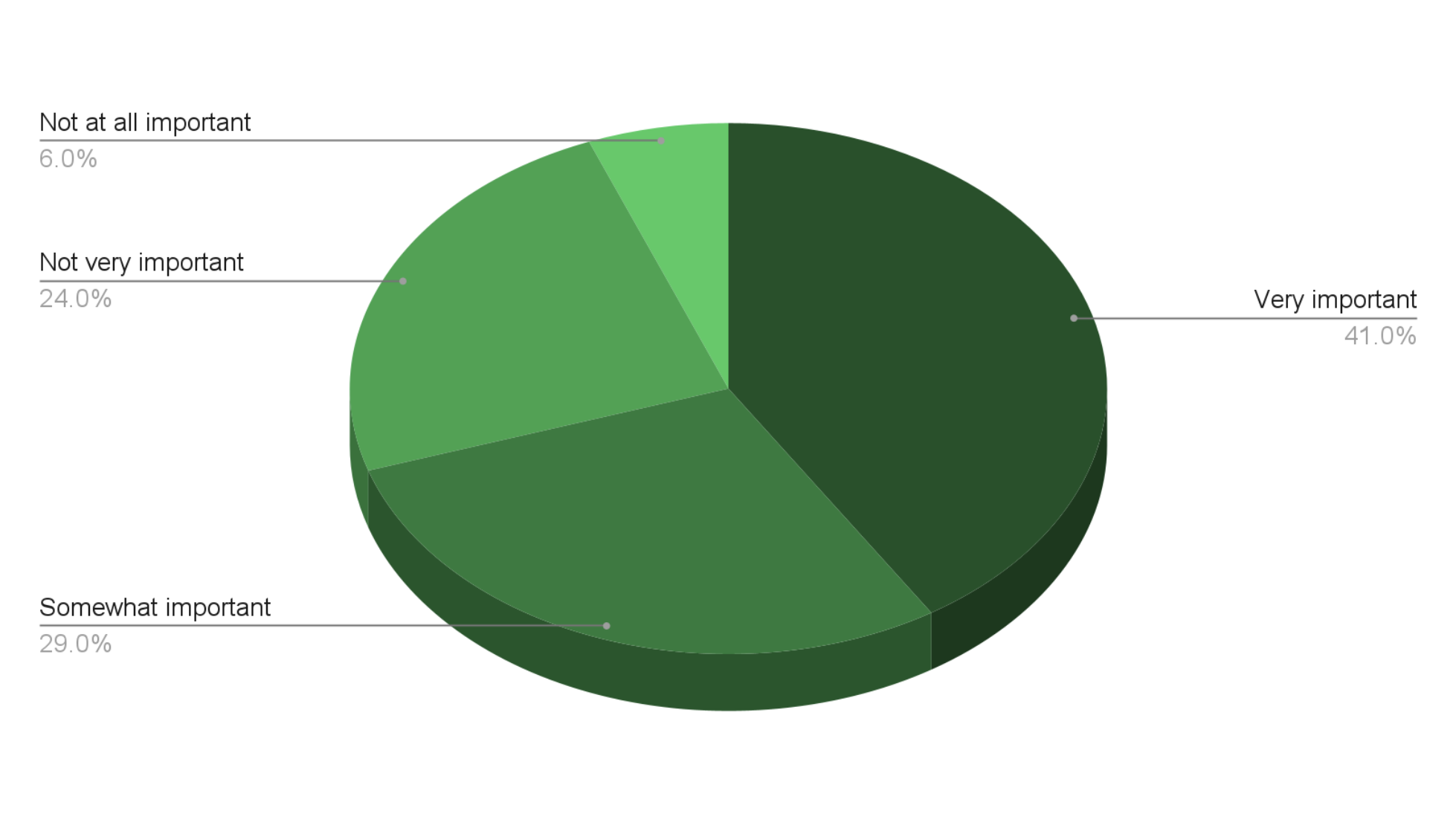

When asked directly about integration, the vast majority say it’s important to have AI capabilities integrated across all their legal tech tools, rather than as separate, standalone applications.

Importance of integrated AI across legal tools

And yet for most legal professionals, AI tools are often bolted on, creating a patchwork system. This is the root of the trust problem.

Fragmented systems create fragmented intelligence. Fragmented intelligence creates uncertainty. Uncertainty prevents trust.

Until legal data, documents, and decisions are connected, AI will remain:

- Helpful—but limited

- Powerful—but risky

- Used cautiously instead of confidently

The issue boils down to the fact that legal work has outgrown traditional case management.

A modern legal matter is not a single dataset or document. It’s thousands of interconnected decisions unfolding over time.

A case today includes:

- Deadlines

- Medical records

- Evidence

- Negotiations

- Correspondence

- Legal strategy

- Workflows

- Outcomes

- Precedents

- Financials

- Risk signals

Most law firms and legal teams are making critical decisions without connected intelligence. For example, matter management and document management should not be perceived as requiring different tools or technology. Instead they should be connected on a single platform with a unified intelligence layer. This approach will mitigate:

- Conflicting data

- Out-of-sync documents

- Opaque workflows

- Lost context

- Inconsistent decisions across the firm

- Inability to forecast outcomes or risk

- Lack of a reliable foundation for AI to operate safely or accurately

Continuing to work in siloed tools creates operational blind spots. When case data is spread across systems, firms make decisions based on partial information, manually stitch together their data, and operate blind to bottlenecks and risks. They are forced to rely fully on human memory rather than system insight.

CHAPTER 4

The Future of Intelligent Legal Operations

Legal teams have moved past the question of whether to use AI. It’s a question of who will gain the AI advantage, using it safely, at scale, and to its fullest potential.

The next phase of legal AI will not be driven by larger models or broader knowledge. It will be driven by better intelligence. That intelligence will be fed by high-quality data, properly nested in context, and grounded in truth.

As AI becomes ubiquitous across the legal industry, differentiation will no longer come from simply having access to AI tools. It will come from how effectively firms and legal departments harness AI—how deeply it is embedded in their operations, how well it understands their data, and how confidently it can be trusted to support real decisions.

High-trust legal AI is:

- Grounded in firm documents and matter data.

- Aware of case history and workflow context.

- Aligned with internal standards and policies.

- Designed to support real legal decisions, not generic answers.

The data in this report points to a clear conclusion: trust-enabled, data-grounded AI is becoming a competitive necessity.

Introducing Legal Operating Intelligence

Legal teams are sitting on a gold mine of quality, contextualized data. However, they lack connected intelligence.

A Legal Operating Intelligence System represents an evolution beyond general-purpose AI. It establishes a unified intelligence layer that:

- Connects data, documents, and decisions

- Preserves context across the full life of a matter

- Serves as the foundation for trustworthy, governable AI
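As a loose illustration of what "connecting data, documents, and decisions" could look like in practice, here is a minimal sketch. It does not show how any particular product is built. Every class, field, and value below is hypothetical and exists only to show one record holding a matter's full context, so that a single lookup returns what an AI assistant would otherwise have to collect from separate tools.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Document:
    title: str
    filed_on: date


@dataclass
class Deadline:
    label: str
    due: date


@dataclass
class Matter:
    """Hypothetical unified record: one matter owns its documents, deadlines, and decisions."""
    matter_id: str
    documents: list[Document] = field(default_factory=list)
    deadlines: list[Deadline] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)

    def context(self, as_of: date) -> dict:
        """Gather the matter's connected context in a single lookup."""
        docs = sorted(self.documents, key=lambda d: d.filed_on)
        upcoming = sorted(
            (d for d in self.deadlines if d.due >= as_of), key=lambda d: d.due
        )
        return {
            "matter_id": self.matter_id,
            "documents": [d.title for d in docs],          # oldest first
            "upcoming_deadlines": [d.label for d in upcoming],
            "decisions": list(self.decisions),             # in recorded order
        }


# Illustrative data only.
matter = Matter("M-001")
matter.documents.append(Document("Expert report", date(2025, 9, 2)))
matter.documents.append(Document("Complaint", date(2025, 3, 14)))
matter.deadlines.append(Deadline("Discovery cutoff", date(2025, 12, 1)))
matter.deadlines.append(Deadline("Answer due", date(2025, 4, 4)))
matter.decisions.append("Retain medical expert")

ctx = matter.context(as_of=date(2025, 10, 28))
print(ctx)
```

In a fragmented setup, the documents, deadlines, and decisions would each live in a different system, and the caller would have to reconcile them by hand before asking AI anything.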

In this model, AI doesn’t sit on top of legal work. It runs through it.

The competitive advantage of Legal Operating Intelligence

When AI is grounded in a unified legal data layer, it can:

- Use firm-owned data as its primary source of intelligence.

- Reason across timelines, workflows, and outcomes as connected elements.

- Learn from operational patterns across matters and teams.

- Reduce risk while increasing confidence in AI-supported decisions.

This shifts AI from a speculative assistant to a dependable operational capability.

What this means for the legal industry

AI adoption is a clear competitive advantage, but having trust in your system is non-negotiable.

As AI becomes ubiquitous, competitive advantage will shift away from who uses AI to who uses it well. The firms that pull ahead will be the ones that:

- Solve intelligence fragmentation

- Enable AI to operate with full context, not partial inputs

- Turn AI from a helper into a dependable system capability

These tools will also be legal-specific rather than general-purpose.

General-purpose AI is trained on broad, public data. Legal professionals, however, trust their own data.

Strategic implications

High-trust AI reshapes more than workflows. It can change how entire firms operate. Benefits include:

- Competitive differentiation: faster, more consistent outcomes.

- Talent development: accelerated learning and better leverage of senior expertise.

- Client confidence: greater transparency, predictability, and reliability.

- Risk management: stronger oversight aligned with ethical and regulatory expectations.

The future of law belongs to firms that build AI on a unified, trustworthy foundation.

AI and the future of the legal workforce

An additional competitive advantage of intelligent legal operations is attracting and retaining talent.

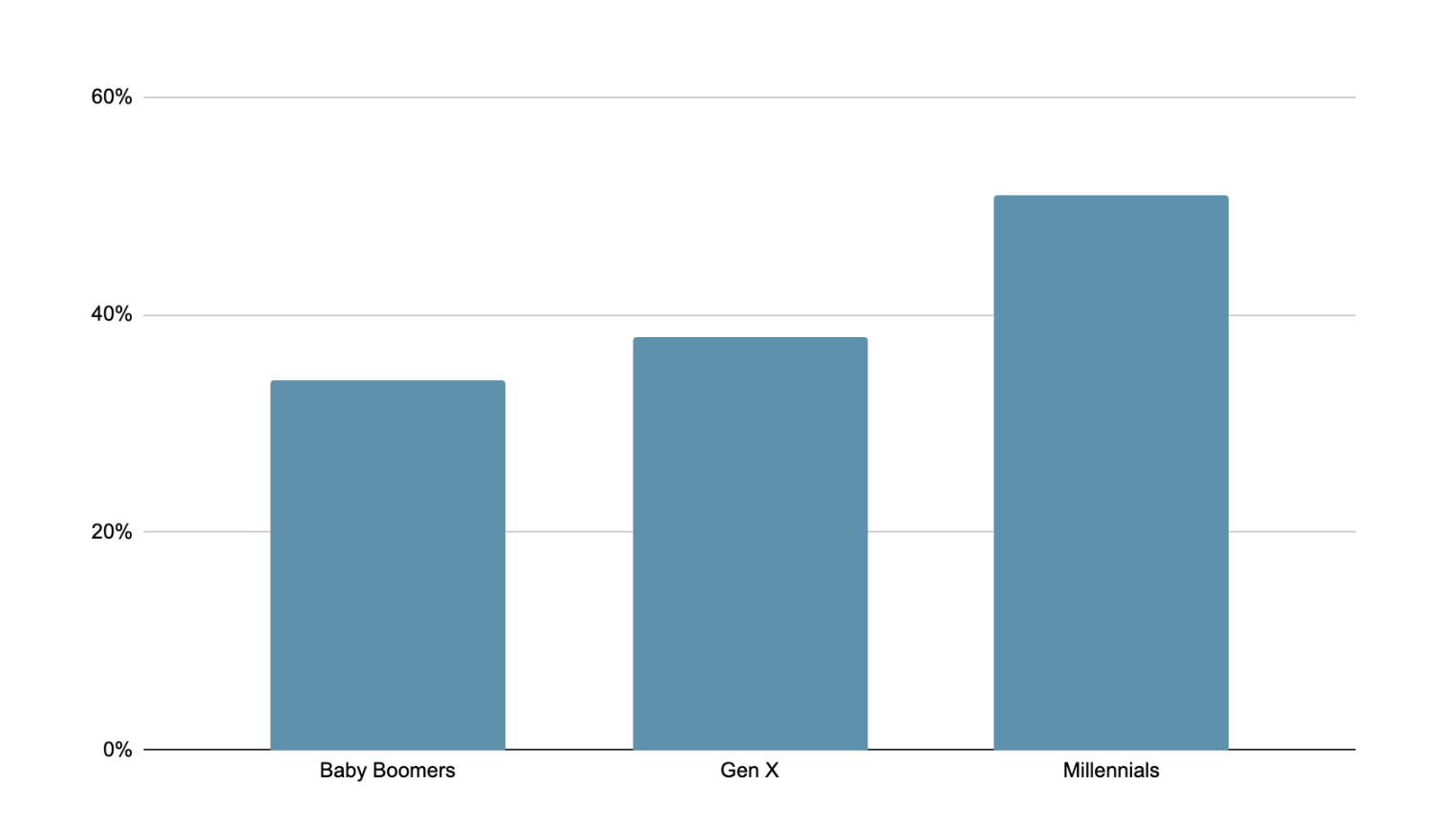

The survey’s generational data is clear. Younger legal professionals use AI more frequently, report higher proficiency, and experience greater productivity gains than their senior counterparts. Millennials and Gen X professionals are already integrating AI deeply into their daily work, while Baby Boomers remain more cautious and less frequent users.

Only about a third of Baby Boomers rely on AI throughout the day, compared to over half of Millennials.

Percentage using AI multiple times a day:

Younger respondents also report higher confidence in AI, and greater increases in confidence over the last year.

The future legal workforce expects modern tools, connected systems, and intelligent support. For them, fragmented technology stacks and manual workarounds are signals that an organization is falling behind. Firms that fail to modernize risk losing their ability to attract, retain, and develop top talent.

Winning in the next era of legal operations

Our research suggests that the future of legal operations belongs to organizations that recognize AI as a capability that must be built on a trustworthy, unified foundation.

Competitive firms will be the ones that:

- Attract and retain the next generation of legal talent.

- Turn fragmented data into connected intelligence.

- Enable AI to operate with full context and confidence.

- Move beyond experimentation to scalable, trusted adoption.

In this next era, AI is not optional—but trust determines who wins. Firms that ground AI in their own data and connect intelligence across their operations are likely to define the future of legal work.

Conclusion: Building AI Lawyers Can Trust

Throughout this report, one message is clear: legal professionals don’t distrust AI. They distrust disconnected intelligence.

The data shows that trust grows when AI runs on cohesive, connected legal intelligence. When AI operates on partial inputs, siloed systems, or generic data, it feels risky and requires constant verification. When it is grounded in firm-specific data, aware of context, and connected across documents, workflows, and decisions, it becomes something legal professionals can rely on.

This distinction matters because the future of legal AI is not general-purpose. It is firm-specific and data-grounded. Legal professionals trust AI that understands their matters, their documents, their standards, and their workflows—not tools trained broadly and layered onto point solutions.

This is the problem LOIS by Filevine was built to solve.

LOIS is the Legal Operating Intelligence System. It unifies data, documents, workflows, and decisions into a single intelligence layer, giving AI the full context legal work demands. Instead of operating as a standalone tool, LOIS runs through the system of record. It’s grounded in firm-owned data, aware of timelines and workflows, and governed by built-in controls.

The result is a different kind of AI:

- Operational, not experimental.

- Contextual, not generic.

- Governable, auditable, and built for real legal decisions.

As AI becomes imperative in legal work, trust will determine impact. Organizations that continue to layer AI on top of fragmented systems will struggle to move beyond cautious experimentation. Those that adopt Legal Operating Intelligence will unlock confident, scalable AI, without compromising accuracy, security, or professional responsibility.

About Filevine

Filevine is the leading legal work platform for modern law firms and legal teams. Built to support the full lifecycle of legal work, Filevine brings together cases, documents, workflows, communication, and data in one connected system—helping legal teams operate with greater clarity, efficiency, and confidence.

At the core of Filevine’s platform is LOIS (Legal Operating Intelligence System). LOIS is Filevine’s unified intelligence layer, designed specifically for the realities of legal work. It connects firm-owned data, documents, timelines, and workflows into a single source of truth, providing the foundation for AI that is contextual, case-specific, and governable.

Together, Filevine and LOIS enable legal teams to move beyond fragmented systems and cautious experimentation and toward trusted, intelligent legal operations at scale.

To learn more, schedule a demo.